Timing is Everything: Temporal Scaffolding of Semantic Surprise in Humor

Yuxi Ma*,

Yongqian Peng*,

Junchen Lyu,

Chi Zhang✉️,

Yixin Zhu✉️

In CogSci, 2026 (Talk)

[Paper]

[Project]

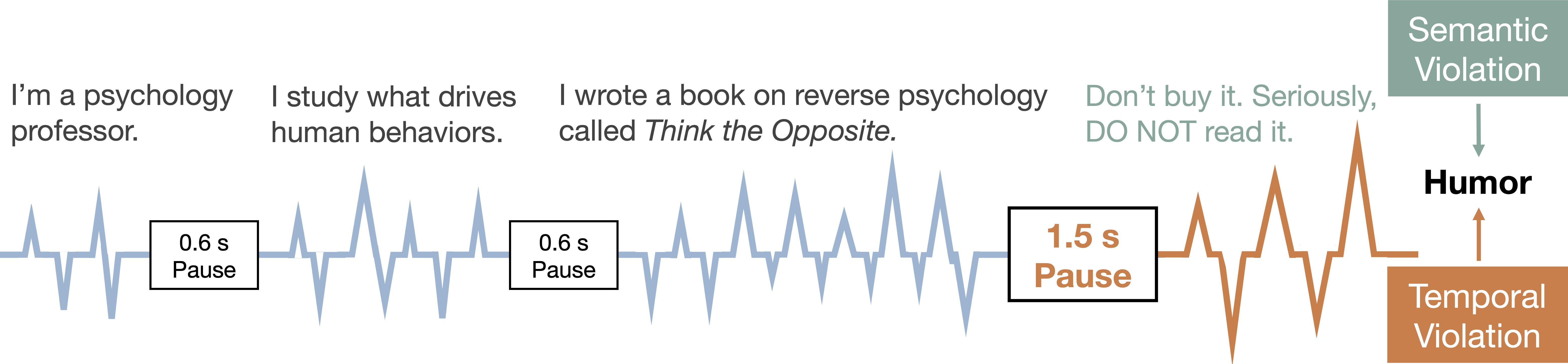

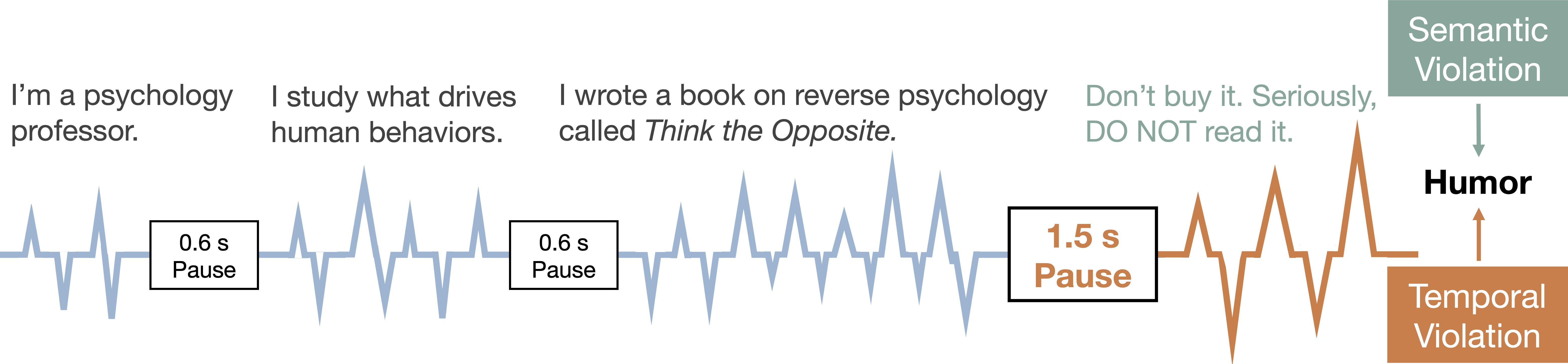

Humor is a fundamental cognitive phenomenon in which humans derive pleasure from expectation violations and their resolution, exemplifying the brain’s dynamic capacity for predictive processing. Classical humor theories emphasize semantic incongruity as the primary driver of amusement, yet overlook temporal dynamics despite comedians' intuition that "timing is everything." The extent to which temporal structure contributes to humor appreciation, and how it interacts with semantic content, remains poorly understood. Here, we propose the Dual Prediction Violation (DPV) framework to capture the interplay between content and timing. By analyzing 828 professional Chinese stand-up performances, we show that temporal features substantially outweigh semantic incongruity in predicting audience appreciation. Specifically, we find that peak semantic violations matter more than average incongruity levels, and that pauses systematically lengthen before high-surprise punchlines, a strategic coupling that distinguishes successful from unsuccessful performances. These findings reframe humor as temporally scaffolded, where timing and semantic content operate in strategic coordination rather than independently. Our DPV framework bridges humor theory with predictive processing, demonstrating that temporal structure plays a central role in naturalistic humor appreciation, with implications for understanding multi-scale prediction integration in linguistic processing.

tl;dr: By analyzing 828 stand-up routines, we show that a comedian's timing matters more for getting laughs than the actual jokes. Experts deliberately pause longer right before big punchlines to build anticipation, proving that when you deliver a joke is just as important as what you say.

Rational Communication Shapes Morphological Composition

Fengyuan Yang,

Yongqian Peng,

Yuxi Ma,

Chenheng Xu,

Yixin Zhu✉️

In CogSci, 2026

[Paper]

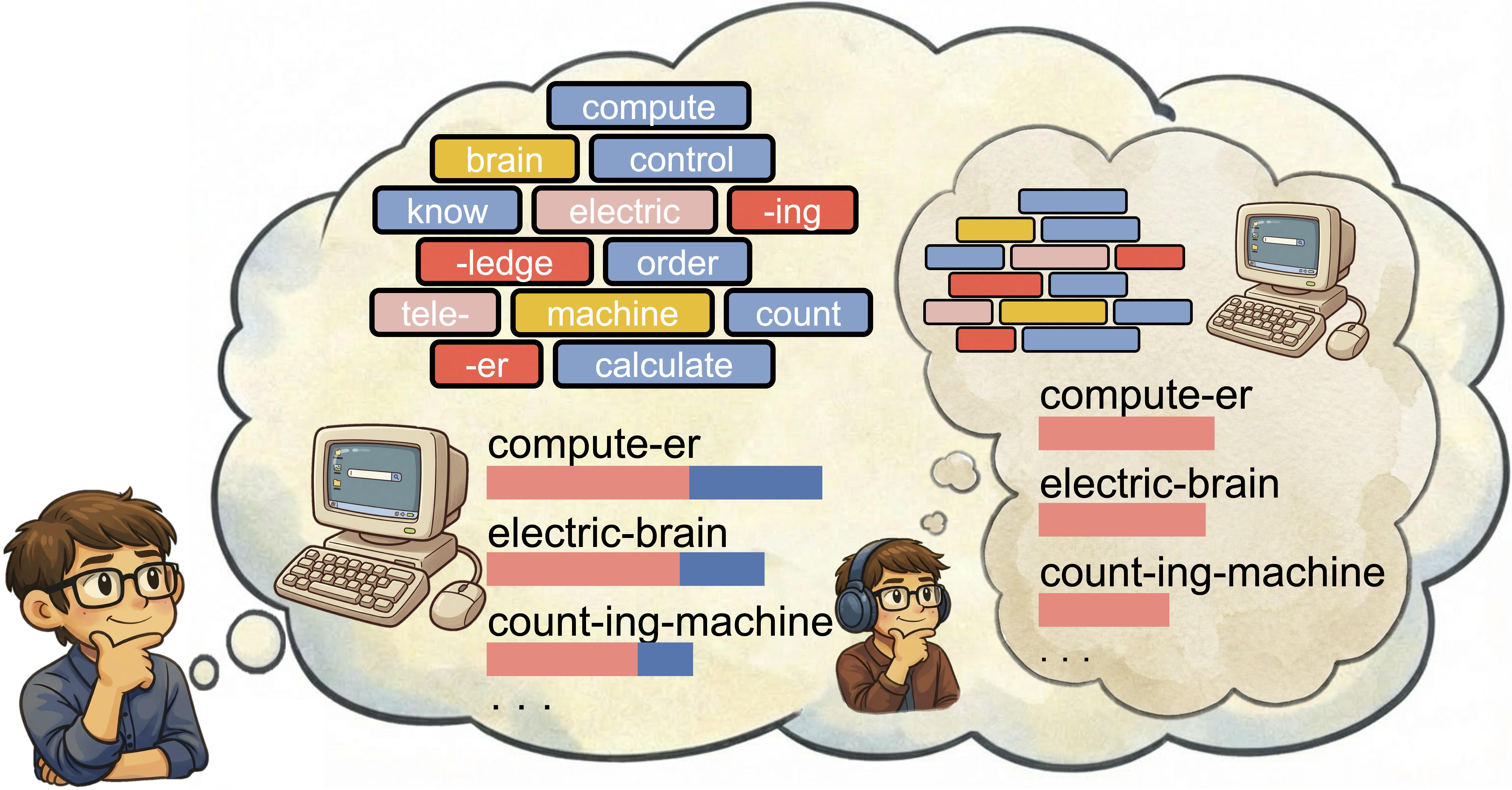

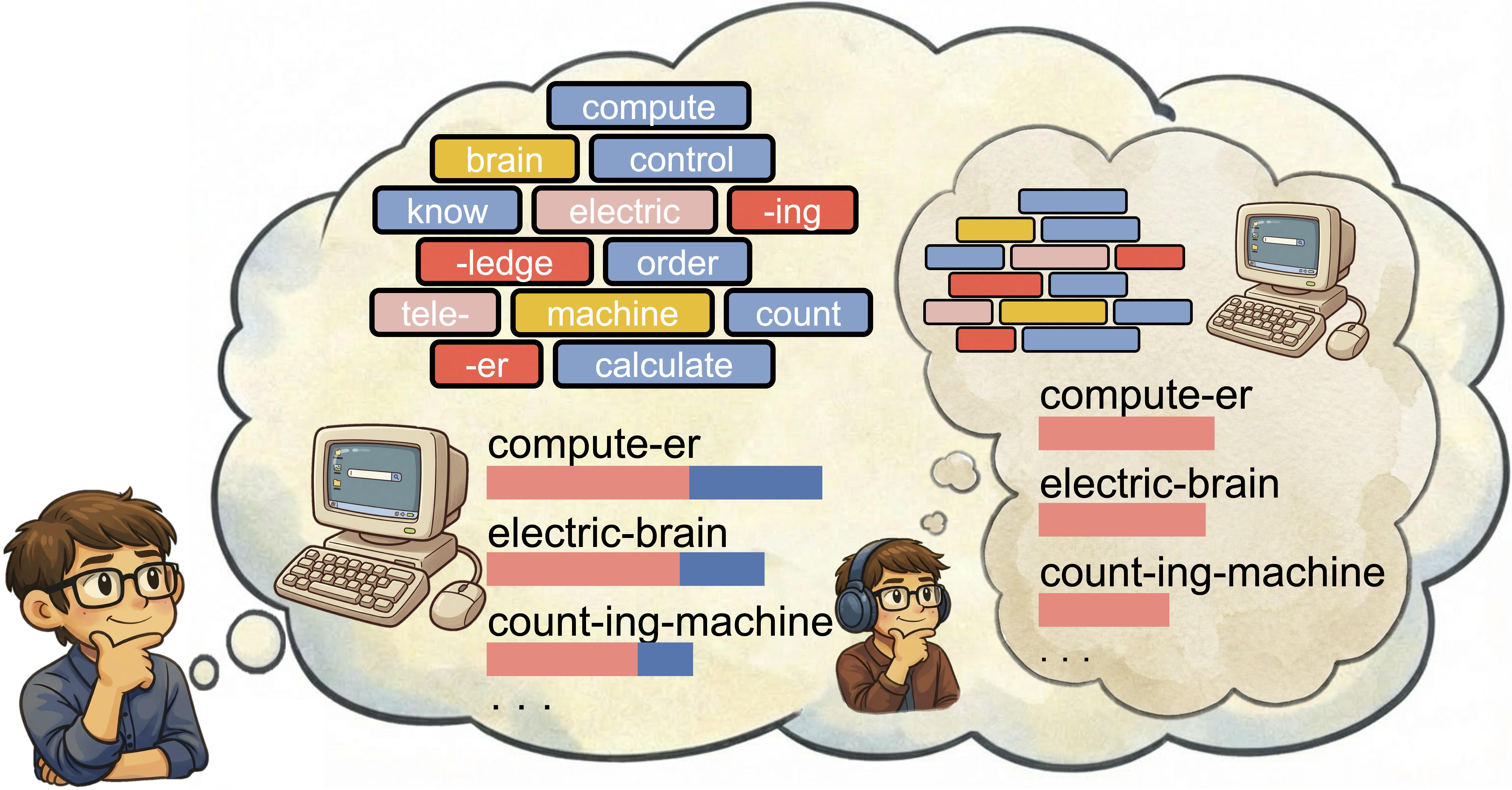

Human languages expand vocabularies by combining existing morphemes rather than inventing arbitrary forms. Communicative efficiency shapes lexical systems at multiple levels (Gibson et al., 2019), yet morphological composition (combining morphemes through compounding or affixation) has rarely been modeled as a historically situated speaker choice among competing morpheme sequences, leaving unanswered why a language settles on one morpheme combination over other plausible alternatives. We ask whether a trade-off between listener recoverability and speaker production cost can predict attested compositions over contemporaneously available alternatives. Here we show, within the Rational Speech Act (RSA) framework (Frank & Goodman, 2012; Goodman & Frank, 2016) and using a time-indexed lexicon constructed from the Corpus of Historical American English (COHA) and the Corpus of Contemporary American English (COCA), that across 4323 naturally occurring English compounds and derivations spanning 1820-2019, attested compositions are systematically ranked above unattested alternatives generated from contemporaneously available morphemes. Models integrating semantic informativeness with production cost outperform semantic-only and cost-only baselines on Mean Reciprocal Rank (MRR) and top-k accuracy (Acc@k). The advantage of the Pragmatic Speaker model (S₁) over the semantic-only baseline grows as the candidate set expands, where meaning alone leaves morphological choice underdetermined. These findings suggest that lexicalization reflects a communicative trade-off between expressiveness and efficiency, extending rational accounts of communication from utterance-level choice to the internal structure of words.

tl;dr: New words aren't coined arbitrarily: languages pick morpheme combinations that balance listener comprehension and speaker effort. We model that trade-off with RSA and show it predicts which English word forms historically won out.

NarrativeLoom: Enhancing Creative Storytelling through Multi-Persona Collaborative Improvisation

Yuxi Ma*,

Yongqian Peng*,

Fengyuan Yang,

Siyu Zha,

Chi Zhang,

Zixia Jia,

Zilong Zheng✉️,

Yixin Zhu✉️

In CHI, 2026 (Long Paper)

[Paper]

[Project]

[Video]

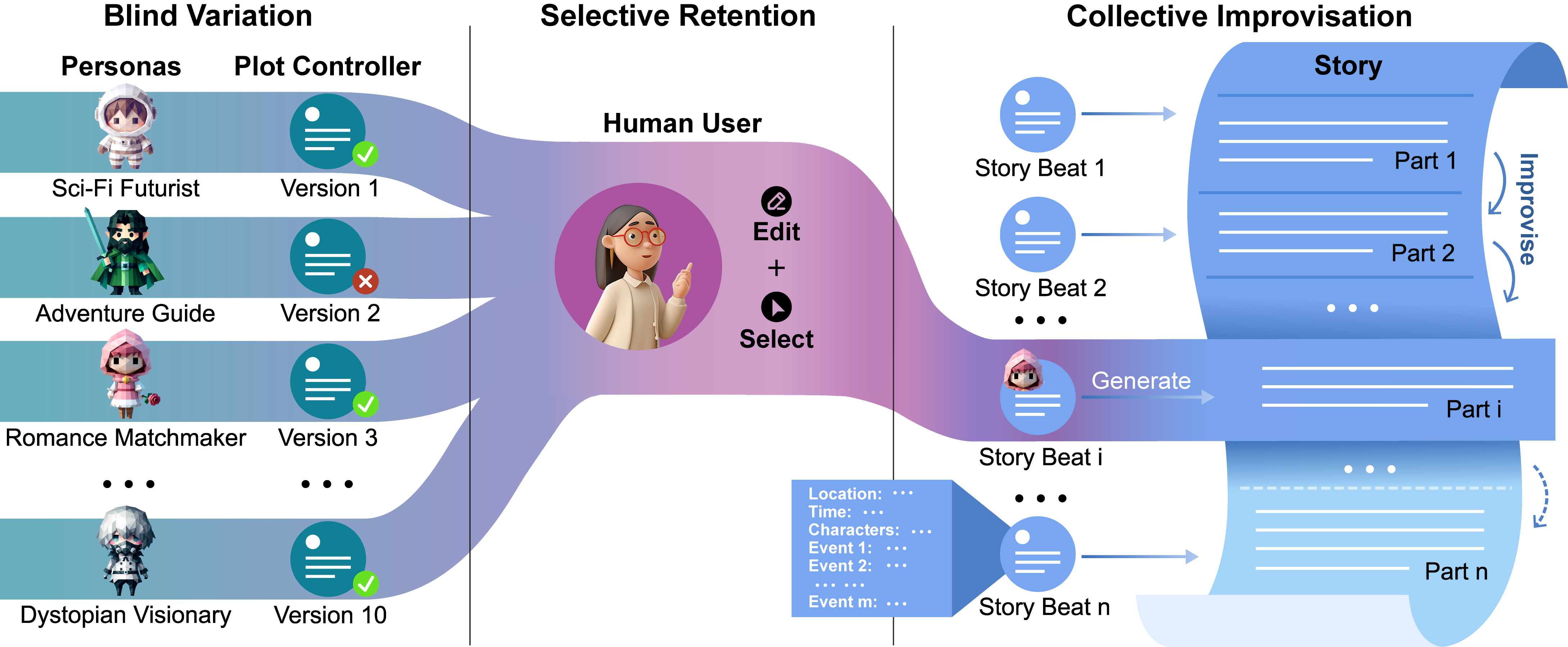

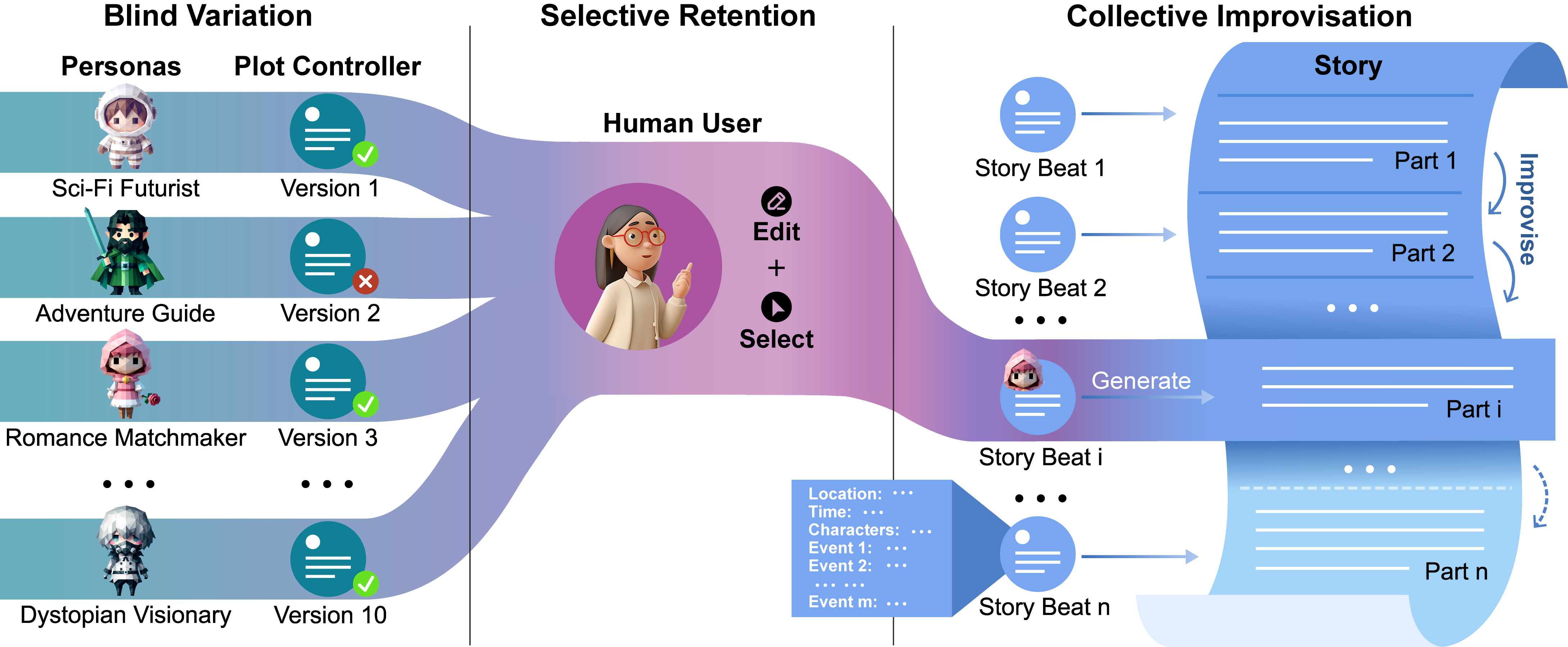

Storytelling, a cornerstone of human culture, thrives on creativity, a domain where modern AI systems often falter. While these systems excel at maintaining narrative coherence, they frequently produce technically sound yet predictable narratives. To address this gap, we introduce NarrativeLoom, a collaborative system grounded in psychological theories of creativity, specifically the Blind Variation and Selective Retention (BVSR) framework. NarrativeLoom leverages multiple AI personas, each embodying distinct narrative styles, to generate diverse story variations. Users iteratively refine these ideas through selective retention, balancing novelty, coherence, and personalization. In controlled experiments, NarrativeLoom outperformed single-persona and chatbot-based systems on creativity and diversity, producing longer, more spatially rich stories without compromising readability. These results empirically validate BVSR's role in computational creativity and establish a framework for human-AI co-creation, demonstrating how AI can amplify, rather than replace, human creative potential.

tl;dr: We introduce NarrativeLoom, a BVSR-inspired multi-persona co-creative system that enables writers to explore diverse narrative possibilities while retaining human creative control, resulting in significantly more creative stories than single-voice AI tools.

Probing and Inducing Combinational Creativity in Vision-Language Models

Yongqian Peng*,

Yuxi Ma*,

Mengmeng Wang,

Yuxuan Wang,

Yizhou Wang,

Chi Zhang,

Yixin Zhu✉️,

Zilong Zheng✉️

In CogSci, 2025 (Talk)

[Paper]

[Slides]

[arXiv]

[Project]

[Code]

[Data]

[Video]

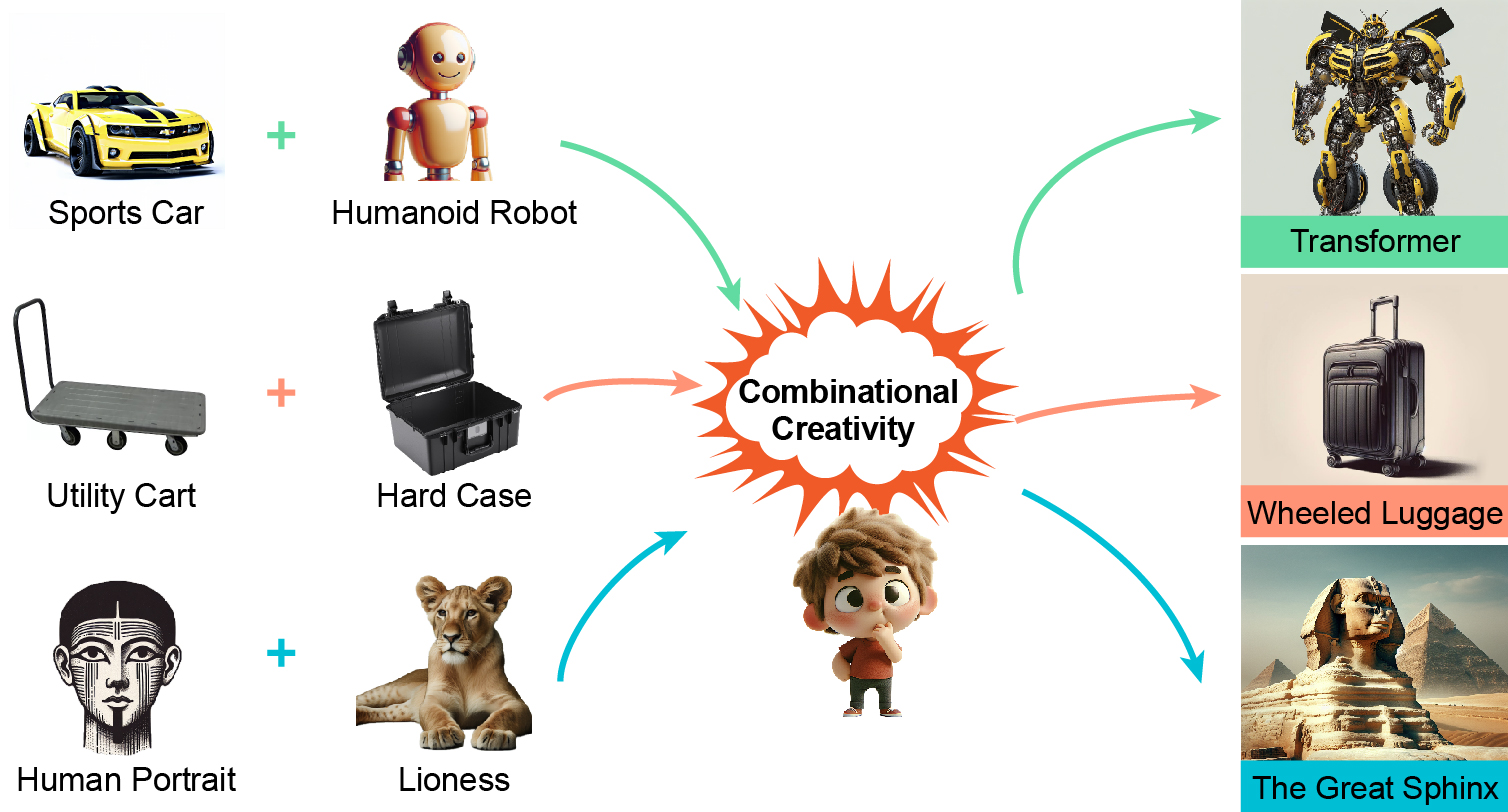

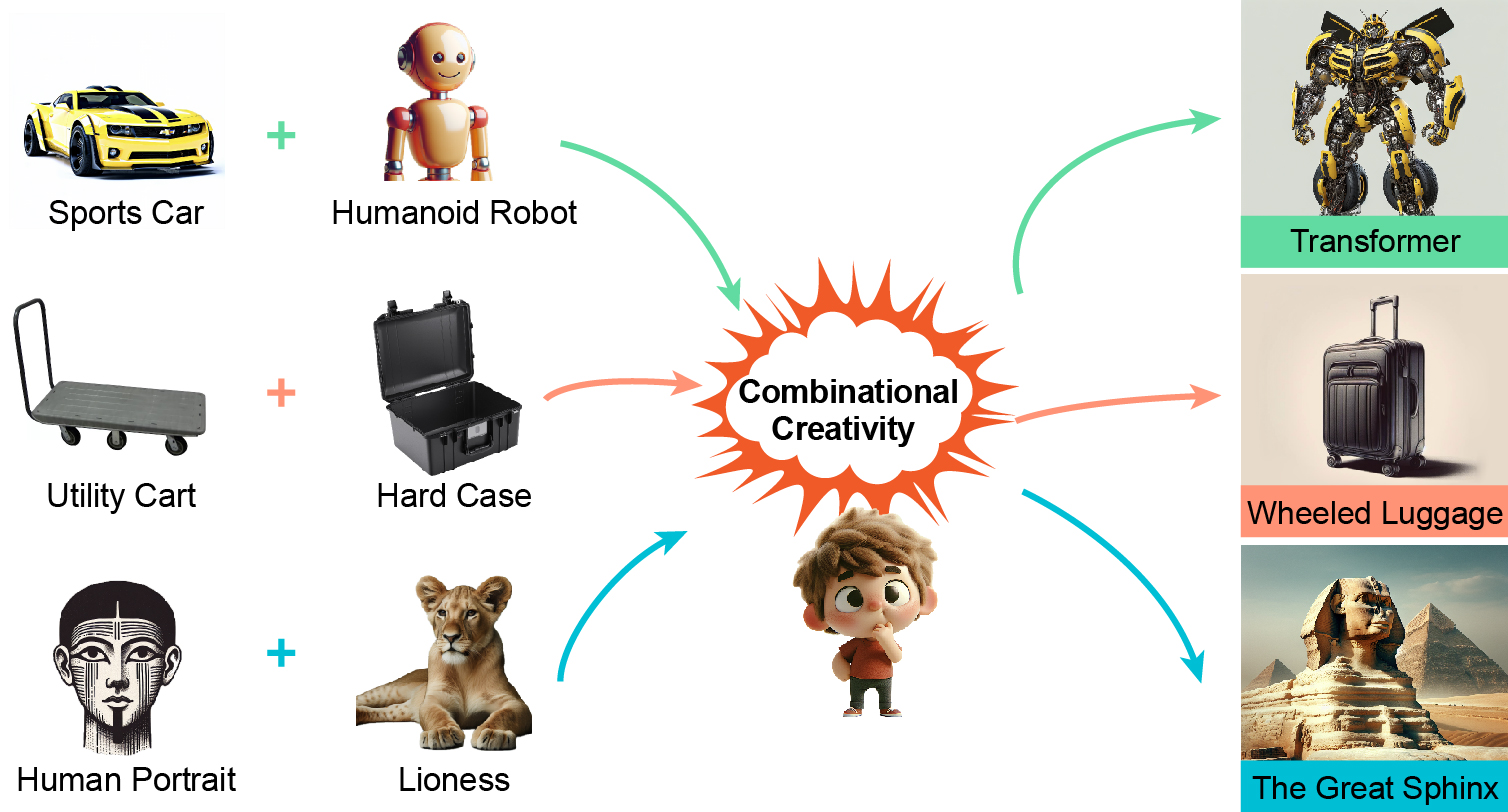

The ability to combine existing concepts into novel ideas stands as a fundamental hallmark of human intelligence. Recent advances in Vision-Language Models (VLMs) like GPT-4V and DALL-E 3 have sparked debate about whether their outputs reflect combinational creativity (defined by M. A. Boden (1998) as synthesizing novel ideas through combining existing concepts) or sophisticated pattern matching of training data. Drawing inspiration from cognitive science, we investigate the combinational creativity of VLMs through the lens of conceptual blending. We propose the Identification-Explanation-Implication (IEI) framework, which decomposes creative processes into three levels: identifying input spaces, extracting shared attributes, and deriving novel semantic implications. To validate this framework, we curate CreativeMashup, a high-quality dataset of 666 artist-generated visual mashups annotated according to the IEI framework. Through extensive experiments, we demonstrate that in comprehension tasks, the best VLMs have surpassed average human performance while falling short of expert-level understanding; in generation tasks, incorporating our IEI framework into the generation pipeline significantly enhances the creative quality of VLM outputs. Our findings establish both a theoretical foundation for evaluating artificial creativity and practical guidelines for improving creative generation in VLMs.

tl;dr: We systematically investigate the combinational creativity of VLMs through the lens of conceptual blending, proposing a novel framework and dataset to evaluate their comprehension and enhance their creative generation capabilities.

Word Embeddings Track Social Group Changes Across 70 Years in China

Yuxi Ma,

Yongqian Peng,

Yixin Zhu✉️

In CogSci, 2025

[Paper]

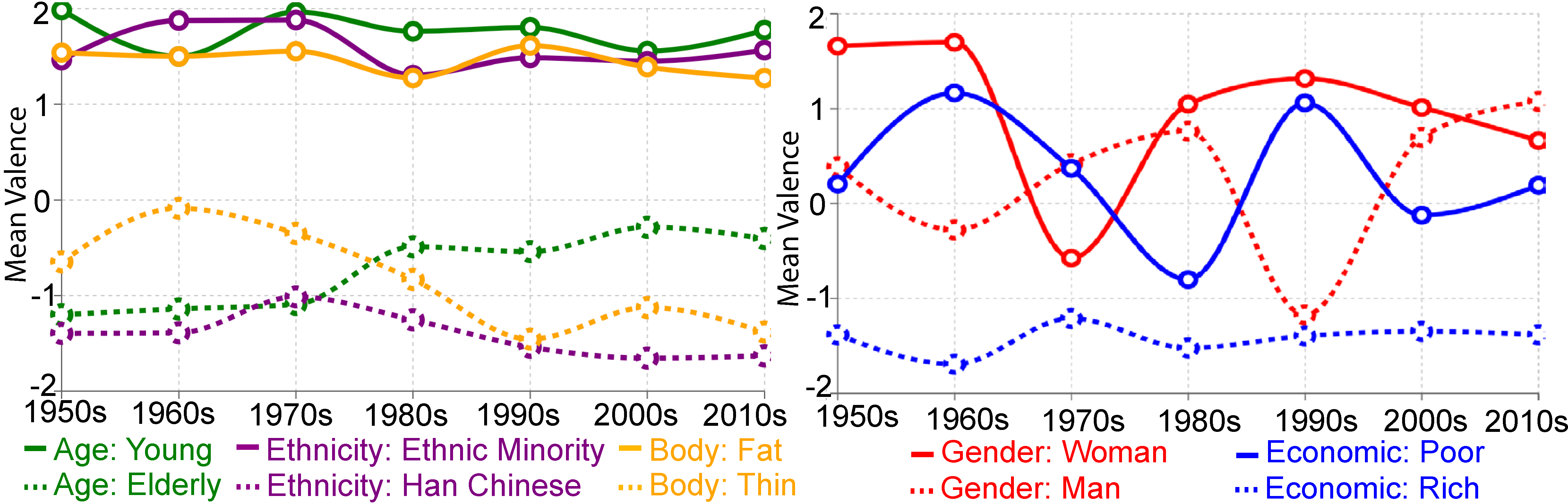

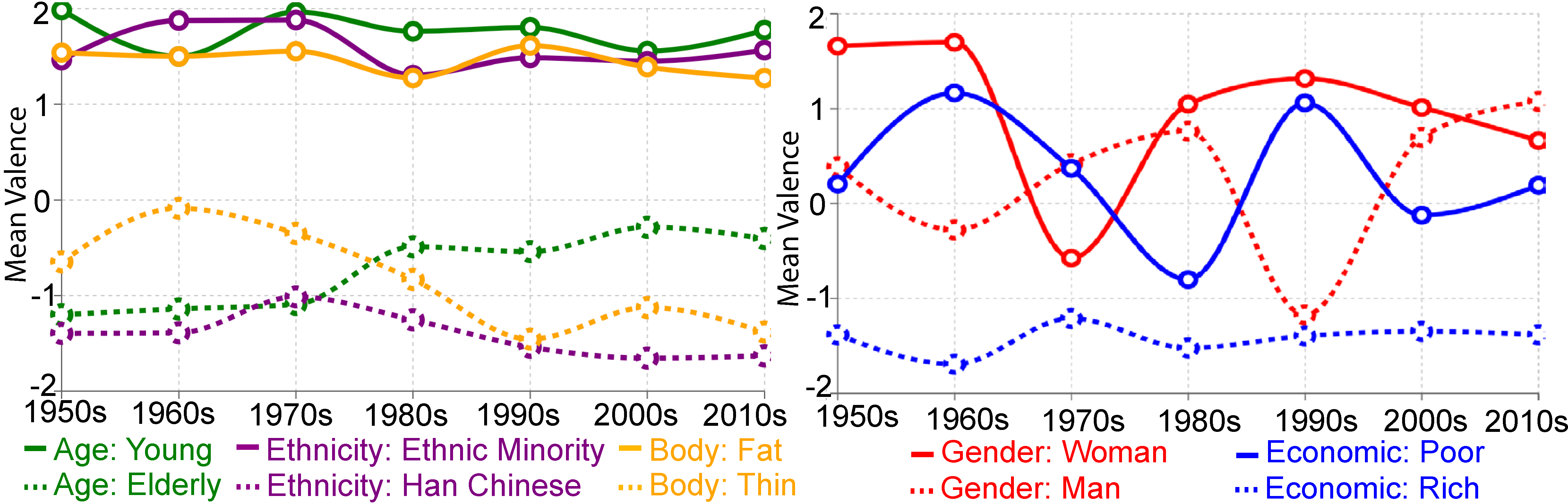

Language encodes societal beliefs about social groups through word patterns. While computational methods like word embeddings enable quantitative analysis of these patterns, studies have primarily examined gradual shifts in Western contexts. We present the first large-scale computational analysis of Chinese state-controlled media (1950-2019) to examine how revolutionary social transformations are reflected in official linguistic representations of social groups. Using diachronic word embeddings at multiple temporal resolutions, we find that Chinese representations differ significantly from Western counterparts, particularly regarding economic status, ethnicity, and gender. These representations show distinct evolutionary dynamics: while stereotypes of ethnicity, age, and body type remain remarkably stable across political upheavals, representations of gender and economic classes undergo dramatic shifts tracking historical transformations. This work advances our understanding of how officially sanctioned discourse encodes social structure through language while highlighting the importance of non-Western perspectives in computational social science.

tl;dr: Using 70 years of Chinese official text, we show that some social stereotypes remain remarkably stable, while gender and class representations are rapidly rewritten during political and economic upheavals—revealing language as both a mirror of society and a site of power.

Cognitive Reasoning (CoRe) Lab, Institute for Artificial Intelligence, Peking University, China

July 2023 - Present

Student Researcher

Advisor: Prof. Yixin Zhu

Peking University, China

Aug 2021 - Present

Undergraduate Student
